A tie between cyber journalists and bio-journalists has already occurred. – Three threats to journalism. – News story on earthquake and tectonic shifts. – Generative journalism. – Two arguments about “robots’ incapability”. – Road map for robot journalism. – Forecasts and suggestions.

A tie between cyber journalists and bio-journalists has already occurred

In May 2015, Scott Horsley, NPR White House correspondent and former business journalist, boldly challenged the WordSmith algorithm created by Automated Insights. “We wanted to know: How would NPR’s best stack up against the machine?” NPR wrote. As NPR is a radio network, a bio-journalist working for them should be very well trained for fast reporting. According to the rules of the competition, both competitors waited for Denny’s, the restaurant chain, to come out with an earnings report. Scott had an advantage, as he was a Denny’s regular. He even had a regular waitress there, Genevieve, who knew his favourite order: Moons Over My Hammy. It didn’t help… though, it depends on how the results are judged.

Scott Horsley competes with a robot. Source: An NPR Reporter Raced A Machine To Write A News Story. Who Won? NPR, May 29, 2015.

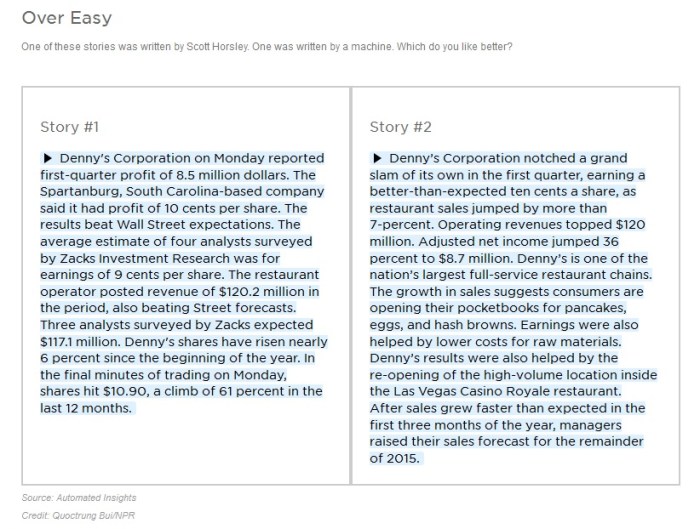

The robot completed the task in two minutes. It took Scott Horsley a bit more than seven minutes to finish. NPR published both news pieces to offer readers a sort of journalistic “Turing test”. Can you identify which piece was written by a robot and which one by a human?

Journalistic “Turing test”. Source: An NPR Reporter Raced A Machine To Write A News Story. Who Won? NPR, May 29, 2015.

The left piece was written by the robot. Its figure density is higher, and its style is drier. Scott, by contrast, added a bit of unnecessary information to his version of the financial report, for instance, this sentence: “the growth in sales suggests consumers are opening their pocketbooks for pancakes, eggs and hash browns.”

Technically, the robot’s vocabulary is larger, as it includes the entire language’s vocabulary. But the robot has to use the most relevant, most conventional, i.e. most frequent words, and that eventually dries up its style. Moreover, the robot’s vocabulary is limited by industrial specialization. To give an example, a robot would never use cooking or sports vocabulary, such as “grand slam,” in a financial report. Humans are the opposite. A human writer is not limited by word relevancy or frequency, and has the freedom to use the rarest and most colourful words, which broadens context and brings vividness. Moreover, an original, unconventional style of writing is what really makes a human a writer. Robots simply don’t feel a need for originality to complete a financial report.

“But that could change,” NPR suggests. If the owner “feeds” WordSmith more versatile NPR stories and modifies the algorithm a little bit, this kind of redesign could broaden WordSmith’s vocabulary. Such things are modifiable.

So who won the competition? The robot wrote faster and in a more business-like fashion. Scott Horsley was more “human-like” (surprise!) but slower. The target audience of this writing is people in the financial industry. Is the lyrical digression about wallets and pancakes valuable to them? As long as the readers are humans, not other robots, it might be.

Ultimately, it is a tie. Although two minutes against seven… For radio and for the financial news market, this difference may be critical.

Academics staged a competition between a horse and a steam engine, too. Christer Clerwall, a media and communications professor from Karlstad, Sweden, asked 46 students to read two reports. One of them was written by a robot and the other by a human. The human news story was shortened to the size of the robot one. The robot news story was slightly edited by a human so that its headline, lead, and first paragraphs looked similar to what editors in the media usually produce. Students were to evaluate the stories on criteria such as objectivity, trust, accuracy, boringness, interest, clarity, pleasure to read, usefulness, coherence, etc.

The results showed that one of the news stories led in certain parameters, and the other one excelled in others. The human story topped such categories as “well-written”, “pleasant to read”, etc. The robot news story won the categories “objectivity”, “clear description”, “accuracy”, etc. So humans and robots tied again.

But the most important thing the Swedish study revealed is that the differences between the average text of a human writer and that of a cyber-journalist are marginal. This is a critical factor in assessing the future and even the present of robot journalism. Cyber-sceptics always say robots can’t write better than humans. But this is a false way to approach the issue. “Maybe it doesn’t have to be better – how about ‘a good enough story’?” Professor Clerwall told Wired.

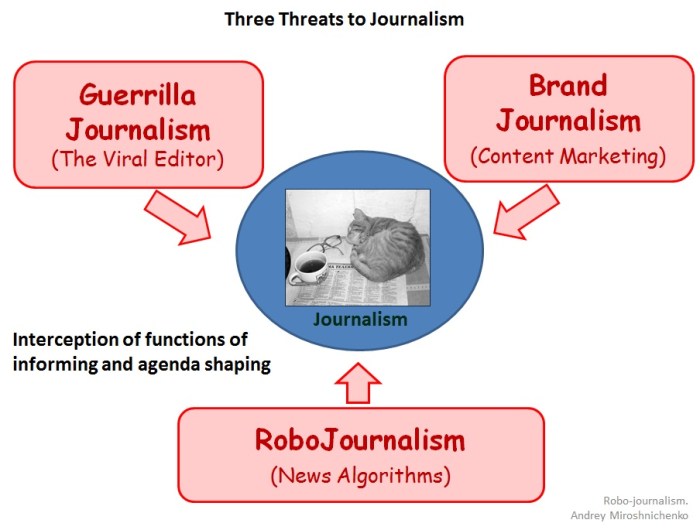

Three threats to journalism

The internet emancipated personal authorship. Millions of people inform each other about everything on the planet. The scariest thing is that, driven by huge enthusiasm, people do it for free. Yes, there is a lot of rubbish on the internet, but everyone consumes information that is carefully selected to suit their interests. Content on the Web is not filtered before publication; it is filtered after, when it’s distributed, thanks to the Viral Editor. As a result, legacy media lose their monopoly on shaping the news agenda. It’s not only about the death of newspapers, which is, by the way, inevitable. The internet is a threat to old media not only because of the shift from print to digital, but also due to the audience’s involvement in authorship.

Another threat to traditional journalism comes from corporate media and content marketing. Corporations also became authors, which means they are less and less dependent on traditional media as mediators. Corporations can communicate on their own. The brand itself is media now.

Content created by amateur authors, or bloggers, is improving thanks to cooperation (that’s the Viral Editor). Corporations, on the other hand, improve their media output thanks to competition for public attention. In the course of a media “arms race,” corporations win over professionals from media companies; they use innovation and, most importantly, shift from direct advertising to social themes. Brands need the audience; advertising only wastes the audience, while content marketing is capable of gathering it. Although wide circles of the public do not really notice these processes, in fact corporate media activity endangers traditional media as much as the blogosphere does.

Still, the blogosphere and corporate journalism are both activities carried out by humans. But poor journalism is now facing a third threat, a soulless and inhuman one. Old media lose readers because of the blogosphere, and they lose advertising because of corporations, and their suffering extends even further. People in the media are going to lose their professions because of writing algorithms, the third threat.

Tired of competing with the digital, editors perceive the algorithm threat with anxiety or, sometimes, rejection. Those more familiar with the issue usually say, “Okay, someday robots will write sports news, financial analytics, and weather reports. But they are incapable of anything else.”

That is a wrong take. Robots are already writing news about weather, sports, and finance, and on a big scale; this is no longer a matter of the future. The answer to the question of whether robots are capable of anything else is “yes”.

News story on earthquake and tectonic shift

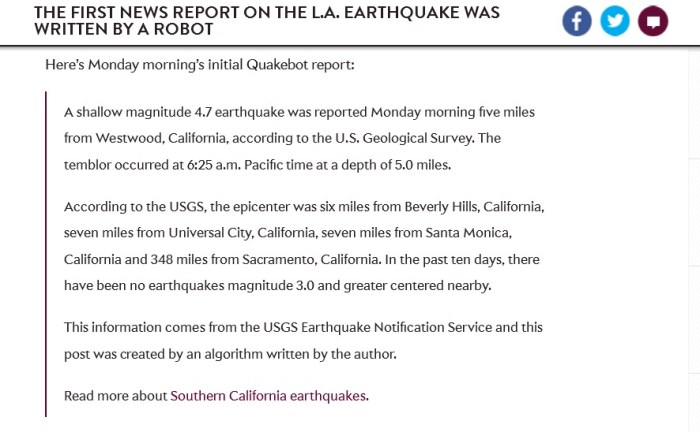

The following story became a piece of journalism history. On March 17, 2014, at 6:25 in the morning, Ken Schwencke, a journalist and programmer at The Los Angeles Times, was jolted awake by an earth tremor. He rushed to his PC, where a news story written by the Quakebot algorithm was already waiting for him in the editorial system. Ken skimmed through the report and pressed “Publish”. Thus, LAT became the first media outlet to report on the earthquake, three minutes after the tremor. The robo-journalist outran its bio-colleagues.

First earthquake report written by a robot. Source: The First News Report on the L.A. Earthquake Was Written by a Robot. By Will Oremus. Slate, March 17, 2014

This LAT report was published an hour after the second tremor. It’s signed by Schwencke, but at the end, there is an addition: “this post was created by an algorithm written by the author.”

The Quakebot algorithm, written by Ken Schwencke, has been around for two years. Quakebot is connected to the U.S. Geological Survey. It takes data directly from there: place, time, magnitude. It compares this data with previous earthquakes in the area and defines “the historic significance” of the event. The data is then inserted into a suitable pattern, and the news report is ready. The robot uploads it into the editorial system and sends a note to the editor. This report is far from being worthy of a Pulitzer, but it allows an editor to publish news minutes after it happens. Needless to say, earthquakes are a hot news item in LA. There is a special “Earthquakes” section of the LAT website, which is filled by a trainee robot-correspondent.
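The pipeline described above (take place, time, and magnitude; compare with earlier quakes; insert into a pattern) can be illustrated with a minimal sketch. This is not Quakebot’s actual code, which the text does not show. The `Quake` class, the function names, the sentence pattern, and the sample values are all invented for illustration, and the “historic significance” rule is a deliberately simple assumption.

```python
from dataclasses import dataclass
from datetime import datetime


@dataclass
class Quake:
    # Hypothetical record holding the three fields the text mentions.
    place: str
    time: datetime
    magnitude: float


def historic_significance(quake, past_magnitudes):
    """Compare the new magnitude with earlier quakes in the same area (assumed rule)."""
    if not past_magnitudes:
        return "the first quake on record for this area"
    if quake.magnitude > max(past_magnitudes):
        return "the strongest quake on record for this area"
    stronger = sum(1 for m in past_magnitudes if m >= quake.magnitude)
    return f"weaker than or equal to {stronger} earlier quake(s) in this area"


def write_report(quake, past_magnitudes):
    """Insert the data into a fixed sentence pattern (invented wording)."""
    when = quake.time.strftime("%Y-%m-%d %H:%M")
    significance = historic_significance(quake, past_magnitudes)
    return (
        f"A magnitude {quake.magnitude:.1f} earthquake was reported at {when} "
        f"near {quake.place}. It is {significance}."
    )


# Invented sample values; only the date and time come from the story above.
quake = Quake(place="Example Town", time=datetime(2014, 3, 17, 6, 25), magnitude=4.0)
report = write_report(quake, past_magnitudes=[3.1, 3.8])
print(report)
```

In a real newsroom setup, the finished string would be handed to the editorial system for a human to approve, as Schwencke did.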

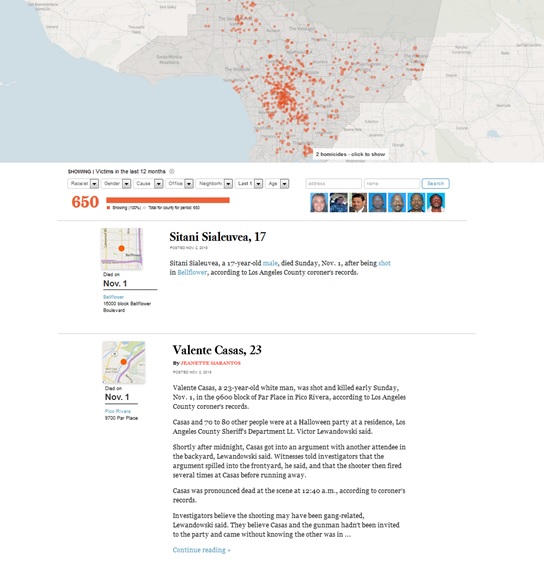

Another robot is responsible for the criminal chronicles in The Los Angeles Times. This robot has been doing the Homicide Report since 2007. As soon as a coroner adds info to the database on, for instance, a violent death, the robot draws all available data, places it on the map, categorizes it by race, gender, cause of death, police involvement, etc., and publishes a report online. Then, if it’s worth it, a human journalist gathers more info and writes an extended criminal news piece. If it’s not worth it, all that appears on the website is the robot’s report.

The Homicide Report Map of Violent Deaths and Criminal Chronicle, The Los Angeles Times.

Media critics point to the way criminal coverage has been changed by robot criminal reporters. It used to be that journalists covered only the murders with the highest resonance potential. But now robots cover absolutely all murders. Consideration of statistics on all killings illustrates the density of murders in specific districts on a map, as a whole and in specific categories such as gender and race. The visualized statistics generate “secondary” content, which is missed in human reporting. The map of killings calculated by the robots has additional value as well, for instance for the real estate market.
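The “secondary” content mentioned above is, at its core, counting records by category. The following is a rough sketch under stated assumptions: the records, field names, and district names are invented, since the text does not describe the real coroner database.

```python
from collections import Counter

# Invented sample records; the real database schema is not described in the text.
records = [
    {"district": "Downtown", "gender": "male", "cause": "gunshot"},
    {"district": "Downtown", "gender": "female", "cause": "stabbing"},
    {"district": "Harbor", "gender": "male", "cause": "gunshot"},
]


def tally(records, field):
    """Count how many records fall into each value of one field."""
    return Counter(record[field] for record in records)


by_district = tally(records, "district")
by_cause = tally(records, "cause")
print(by_district.most_common())
print(by_cause.most_common())
```

A human reporter covering only the most resonant cases never produces these totals; a program that sees every record gets them as a by-product.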

It is worth adding that a criminal reporting robot covers a territory of 10 million people – it’s comparable to the population of Sweden or Portugal. Certainly, a bio-journalist can’t make instant statistical calculations on such a scale.

Analysis of such cases by media critics suggests that robots help journalists gather facts and offer initial processing of data. Journalists then have more time for creativity. This is true. A criminal reporting robot doesn’t write at all, and neither does Quakebot, which just uses phrase samples and patterns. They are good helpers for humans, that’s it.

The cases of financial and sports journalism are a bit more complicated.

Generative journalism

Here is a news story written by the WordSmith algorithm and published by the Associated Press (you don’t have to read it; just look at the quality of reporting).

Alcoa swings to 2Q profit of $138M, revenue flat

Jul. 8, 2014 10:32 PM EDT

NEW YORK (AP) — Alcoa Inc. (AA) on Tuesday reported a second-quarter profit of $138 million, reversing a year-ago loss, and the results beat analysts’ expectations.

The company reported strong results in its engineered-products business, which makes parts for industrial customers, while looking to cut costs in its aluminum-smelting segment.

Alcoa has struggled in recent years with low aluminum prices and has increased its focus on making sheets and other products for manufacturers of airplanes and autos, who value aluminum for its light weight. Two weeks ago, Alcoa raised its bet on the finished-goods side of the business by announcing the $2.85 billion acquisition of British jet engine component maker Firth Rixson.

CEO Klaus Kleinfeld said the latest results show that the strategy is working. “Our transformation is continuing, and we are changing the portfolio,” he said on a conference call with analysts.

Alcoa has been closing smelters to reduce capacity in the older side of its business. Even there, however, results improved as the average price per ton of aluminum climbed 2.4 percent from a year earlier.

The company left unchanged its forecast of a 7 percent increase in aluminum demand this year.

Alcoa said second-quarter net income was 12 cents per share. In the year-ago quarter it lost $119 million, or 11 cents per share.

Excluding costs for closing a smelter and mills and other special items, the company earned 18 cents per share. The average estimate of analysts surveyed by Zacks Investment Research was 13 cents per share.

The refiner and producer of aluminum and products made from aluminum, nickel and titanium reported revenue of $5.84 billion compared with $5.85 billion in the same quarter a year ago. That beat Wall Street forecasts of $5.63 billion, according to Zacks.

Before the report, Alcoa shares rose 11 cents to close at $14.85. They were up another 16 cents, to $15.01, during after-hours trading.

The shares have risen 40 percent since the beginning of the year, while the Standard & Poor’s 500 index has climbed 6.2 percent. The shares have climbed $7.04, or 90 percent, in the last 12 months.

This news report was compiled in less than a second. The robot extracted the facts from the earnings report, made the necessary comparisons with market dynamics, and generated a rather profound and reasonably coherent text.

It’s even more astonishing that in 2014, the Associated Press published 3,000 WordSmith news stories in one quarter. That is about ten times more than AP journalists used to write within the same time period. Robots are already conquering the financial news market in the world’s leading media. Another leader in what we may call generative journalism, Narrative Science, supplies its Quill robot service to Forbes.

Let’s look at sports reporting, to see what’s going on there. Here is a fragment of a children’s baseball league game report, written by the Stats Monkey algorithm, a creation of Narrative Science:

Friona fell 10-8 to Boys Ranch in five innings on Monday at Friona despite racking up seven hits and eight runs. Friona was led by a flawless day at the dish by Hunter Sundre, who went 2-2 against Boys Ranch pitching. Sundre singled in the third inning and tripled in the fourth inning … Friona piled up the steals, swiping eight bags in all …

Stats Monkey’s specific trait is that it uses baseball slang. That is not its only benefit. Children’s game results are entered by parents into a special iPhone app during the game. The fans, the little baseball players’ relatives, receive a detailed report about the match even before the competitors finish shaking hands on the field. It goes without saying that such reports, no matter their style, are much more important to these fans than a Super Cup report.

In 2011, an algorithm wrote 400,000 reports for the children’s league. In 2012, it wrote 1.5 million. For reference, there were 35,000 journalists in the USA that year. They would not have been willing to cover Little League games, regardless of how much they were offered for doing so. That’s another aspect of robot journalism: algorithms can cover those areas of journalism that are skipped by human reporters due to “low newsworthiness”.

BTW: The digitalization of sports itself opens new horizons for robot sports journalism. As Steven Levy noted in his article for Wired back in 2012, sports leagues have covered every inch of the field and each player with cameras and tags. Computers gather all the data one can imagine, such as ball speed, distance of throw, rest of leg, altitude: all possible telemetry. A well-trained robot can spot a weakened pitcher’s throw, or that a player started to lean left before the batter made the winning hit. Is this information important? It is, but a human reporter wouldn’t notice it. Old sports journalism can’t do it, just as old criminal reporting lacks interactive maps of murder density.

In other words, robots have already surpassed human reporters in data journalism, in speed and in scale of news coverage. But can they beat humans in writing style?

Two arguments about “robots’ incapability”

The main argument against the future dominance of robots is about machines’ inability to create. There are two main ideas with regard to that: a robot can’t invent like a human, and a robot can’t write like a human. Let’s examine them.

1) A robot can’t invent.

Yes. Certainly, serendipity is a human experience. A human can stumble upon accidental inventions or discoveries for no reason (like when an apple falls on one’s head), like a brainwave. There is a similar phenomenon of “eureka”, a sudden illumination, which is hard to describe in logical terms. That’s why the creative spark will always remain a human prerogative. Humans are able to be proactive, and they thrive on creativity, eureka moments, and serendipity. A robot’s actions, on the other hand, are predetermined by an algorithm (as we see it today).

That’s why sceptics would say that robots won’t be able, for example, to spot a sensation in a series of similar events, as a human editor does. Moreover, a robot won’t be able to decide to inflate an ordinary event to the scale of a sensation, as editors do by intentionally picking one event from the series.

But. What if, in turn, robots can do something humans can’t? First of all, it’s about the cross-analysis of data of any size, and identifying correlations. For instance, cross-analysis of the consumer and political preferences of red-car owners may reveal that they voted for Bush. A human would most likely be knocked out by trying to find and explain such correlations. It turns out that the cause-and-effect relations that slow human journalism down are often unnecessary in a world of Big Data. Robots have opportunities to find fantastic correlations that are sometimes highly important for marketing, politics, and media. The world is full of them, and bio-journalists are just unable to see them.

What if an algorithm’s ability to come up with correlations compensates for and even replaces human serendipity, such as eureka moments, and even the sense of humour? Facts derived from Big Data may be as interesting and irrational as the outcomes of human creativity.

2) Robots don’t have a sense of style.

Yes. It’s true, robots don’t aim to write in a beautiful manner, and even if they had such a goal, what would be defined as “beauty”? What is that?

But. If it is impossible to calculate beauty, it is possible to calculate human reactions to it. Humans themselves can serve as a measure of beauty for robots. Imagine that all texts and the human reactions to them, such as likes, shares, comments, and click-throughs, are gathered in the robot journalist’s database. Even today, robots are able to identify the attractiveness of headlines, topics, key words, etc., by observing people’s reactions. Editors guess, robots know. And now let’s imagine that with the help of biometrics (eye tracking already allows for this), robots are able to analyze human physiological reactions to certain semantic and idiomatic expressions, epithets, syntax constructions, and visual images. Such technologies are getting more and more affordable; the only question is the amount of data and the processing speed.
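To make “calculating human reactions” concrete, here is a minimal sketch, assuming a toy database of headlines paired with reaction counts. The headlines, the numbers, and the scoring rule (average reactions per word) are all invented for illustration; real systems would use far richer signals.

```python
from collections import defaultdict

# Invented sample data: (headline, total reactions such as likes and shares).
stories = [
    ("Robots write news", 100),
    ("Robots win", 300),
    ("Humans write slowly", 50),
]


def keyword_scores(stories):
    """Average number of reactions for stories whose headline contains each word."""
    totals = defaultdict(lambda: [0, 0])  # word -> [reaction sum, story count]
    for headline, reactions in stories:
        for word in set(headline.lower().split()):
            totals[word][0] += reactions
            totals[word][1] += 1
    return {word: total / count for word, (total, count) in totals.items()}


def rank_headline(headline, scores):
    """Score a candidate headline by the mean score of the words seen before."""
    known = [scores[w] for w in headline.lower().split() if w in scores]
    return sum(known) / len(known) if known else 0.0


scores = keyword_scores(stories)
candidate_score = rank_headline("Robots win again", scores)
print(candidate_score)
```

Even this crude rule lets a program compare two candidate headlines before publication, which is the sense in which “editors guess, robots know”.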

Such systems can automatically produce more attractive headlines and texts. But other kinds of problems may arise: 1) attractiveness could become white noise; 2) the chase for human reactions could make generative journalism degenerative. But as long as this is a period of transition, what matters is that analysis of human reactions can compensate for the lack of senses in robots.

In other words, for every argument on what robots can’t do, there is a more convincing argument for what humans can’t do. In this competition of capabilities, robots and humans also end up in a tie.

The competition has just begun, but it’s a tie already.

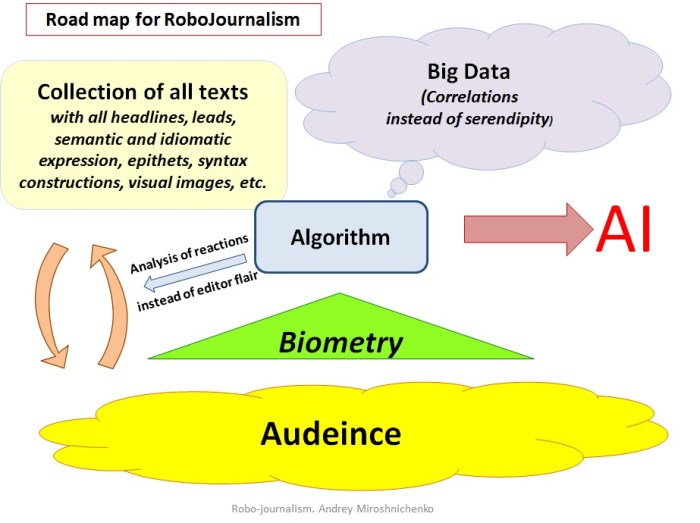

Roadmap for robot journalism

Based on what is already known about robot journalists, one can imagine how they are going to conquer the world. Or occupy the journalistic profession, for a start. Here are the key points on how robot journalism is going to operate in the future.

1) Big Data. Algorithms are to develop a way to manage big data. Their ability to find correlations will in some ways replace human creativity. The best human minds are working on it now. These human minds don’t care that much about saving journalism. “If there is a free press, journalists are no longer in charge of it. Engineers who rarely think about journalism or cultural impact or democratic responsibility are making decisions every day that shape how news is created and disseminated,” said Emily Bell, professor at the Columbia Journalism School, in her speech with the self-explanatory title “Silicon Valley and Journalism: Make up or Break up?”

2) Database of all texts and analysis of audience reactions. By monitoring, gathering, and analyzing all journalistic texts and people’s reactions to them, algorithms can easily calculate which texts with which parameters gathered more likes, reads, reposts, and comments.

There might be some niches left for only humans to perform journalism. These will be the preservation area for bio-columnists. But on an industry scale, these spots that robots can’t cover won’t make any difference. Furthermore, the share of human-made journalism will more and more decrease because of the acceleration of overall growth of content, produced by robots.

3) Biometrics. Once robots get access to human non-verbal reactions and body language, they will be able to calculate inexplicit reactions instantly. Imagine someone reading a story about Trump: the touchscreen senses hands sweating, the webcam fixates on dilated pupils, the microphone hears an increase in breathing frequency… This technique can replace the editor’s intuition. Moreover, being applied to millions, such a technology will become the biggest lie detector in history.

Technology is replacing humans with algorithms at a high speed. Why should they stop developing? It’s an open highway, leading to artificial intelligence, by the way.

Forecasts and suggestions

Co-founder and CTO of Narrative Science Kristian Hammond thinks 90% of the news could be written by computers by 2030. Hammond also thinks a computer could write a story worth a Pulitzer Prize by 2017.

I would add that we are going through both a qualitative and a quantitative competition with our cyber-colleagues. In the quantitative contest, bio-journalists are already losing. We are to lose in the qualitative competition in 5-7 years.

It is interesting that at early stages of transition from human journalism to robot journalism, editors will be the ones to kill the profession. Newsrooms have to produce as much content as possible to increase traffic. A journalist may simply not have time for serious topics, but s/he has to run more and more stories for the website. It’s motion for motion’s sake. Journalism theorist Dean Starkman called this effect the “hamsterization of journalism”, or the Hamster Wheel. The hamsterization of journalism reduces time spent by the journalist on each story in order to produce more stories: “do more with less”.

Let’s imagine that a good article, which means good journalism, can attract thousands of readers. But what if a thousand news stories written over the same time period were able to attract a hundred readers each? Actually, when traffic is king, editors don’t need the best journalists; they need fast journalists… Whom will the editor choose: a capricious journalist with increasing salary demands and three stories a week, or a faultless algorithm with decreasing maintenance costs and three stories a minute?
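The arithmetic behind this comparison can be spelled out in a few lines. The figure of 5,000 readers for the single good article is an assumption standing in for “thousands”; the other numbers come from the paragraph above.

```python
MINUTES_PER_WEEK = 7 * 24 * 60


def total_readers(stories, readers_per_story):
    """Total audience reached by a batch of stories."""
    return stories * readers_per_story


# One good article; 5,000 is an assumed stand-in for "thousands of readers".
human_total = total_readers(1, 5_000)

# A thousand robot stories with a hundred readers each.
robot_total = total_readers(1_000, 100)

# Output per week: three stories a week versus three stories a minute.
human_weekly_stories = 3
robot_weekly_stories = 3 * MINUTES_PER_WEEK

print(human_total, robot_total)
print(human_weekly_stories, robot_weekly_stories)
```

Under these assumptions the thousand weak stories reach twenty times the audience of the one good article, which is why traffic-driven editors favour volume.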

The Associated Press buys the WordSmith service not because the algorithm writes better than humans do. The reason is that the algorithm writes more and faster. Debates about text quality are not relevant. Robots will conquer newsrooms not for belletristic reasons, but for economic ones.

Thus, the robots’ advent is unstoppable. Under these conditions, the most beneficial strategy for newsrooms is to be the first ones at the beginning of robotization, and the last ones at the end of it.

For now, the editorial use of algorithms that generate texts could be an interesting PR strategy, attractive for both audiences and investors. But when algorithms fill the market, the rare human voice will be in demand amid the robots’ metal squeak.

In this sense, as strange as it could be, human journalism will be valued at the final stages of robotization of media. Moreover, the editorial mistake will be particularly valued and attractive. That’s going to happen at least until robots learn to simulate editorial mistakes, too.

In 2014, Wordsmith, one of the two most powerful news-writing algorithms, wrote and published 1 billion news stories. This may be comparable to, or even more than, what bio-journalists wrote and published. Part of this “billion” was meant to increase the physical volume of content. The other part was already meant to replace human writing.

The market is going to demand more and more. Nothing can stop robots from writing as much as they are required to, since the only limit for them is the volume that people can read. And even this limit will be removed, once the readers are also robots.

See also books by Andrey Mir:

- Digital Future in the Rearview Mirror: Jaspers’ Axial Age and Logan’s Alphabet Effect (2024)

- Postjournalism and the death of newspapers. The media after Trump: manufacturing anger and polarization (2020)

- Human as media. The emancipation of authorship (2014)
